The other day at church, someone asked me if I thought AI was a fad. I was caught off guard. I could tell the person was probably not a fan of AI, and I had to be up front to play the piano in about 30 seconds. So I rattled off a quick answer that probably didn’t land and headed off to play. It was a quick conversation, but it got me thinking about how people’s opinions of AI are often a reaction to the very narrow slice of it that they’ve personally experienced.

If I had it to do over again with more time to respond, I would have started by asking what they meant by “AI.” Everyone means something different when they say it, and even when they define it, it’s still easy to misunderstand them. In practice, many people mean “the free chatbot I tried.” If that’s your only exposure, I completely understand why you might decide that AI isn’t very impressive.

The free versions of Copilot and ChatGPT might not amaze you, but for coders, AI is incredible. Programming languages are a perfect playground for LLMs because the languages are precisely defined and there are countless examples for the models to train on. But for other professions where AI isn’t as good (yet), it can be harder to see the value.

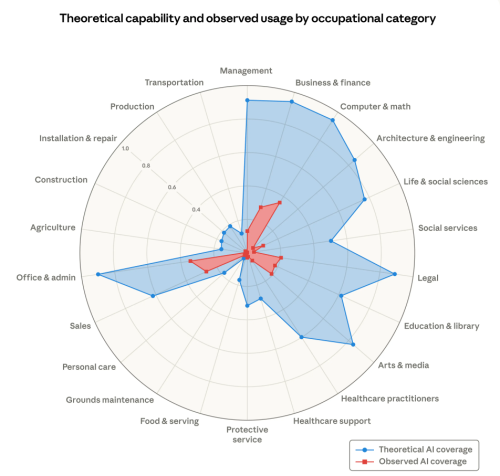

Anthropic (the company behind some of the most impressive AI models) recently published a paper on the labor impacts of AI.

Note that the red area is the impact that is already happening and the blue is the theoretical impact. I don’t know how they judge the theoretical upper bound, but the chart is a helpful way to frame the conversation. Will AI impact all areas of life? Yes. Will it have the same level of impact on all areas? Not even close.

The other big takeaway that is hard to grasp is that we ain’t seen nothing yet. So many areas are going to see enormous growth. For example, someone sequenced their dog’s DNA, developed a custom cancer vaccine, and cured their dog. It will take a while for this kind of thing to translate to humans, but I think we’ll look back on the next decade and see a noticeable increase in life expectancy. It’s so much easier to zero in on plausible new drugs for trials when we can feed an agent tons of existing research, all the existing legislation around what can be done, and a series of desired outcomes. We’ve seen a similar pattern at work, where we are researching new datacenter technology for physical tasks like improved heat dissipation.

In my area of computer programming, the change has been indescribably large. I’m blessed to have unlimited access to the best models on the planet regardless of cost, and my work life is completely different from what it was even six months ago. I have barely typed a line of code in the last three months, but I have produced more code and more business value than I ever have before. Is it perfect? No, but it is clear that people who know how to use the tools can produce a lot more value than those who don’t.

Areas that rely heavily on the generation of documents are not nearly as far along in their journey, and, according to this analysis, do not have quite as high a theoretical maximum impact as the more technical areas. This aligns with my personal experience as well. AI is better at summarizing and editing documents than at creating them. I don’t write documents with AI, but I regularly use AI to review my work, suggest where the document might be confusing for readers, etc.

And finally, areas that are heavily based on physical work see the least impact now and have the least theoretical impact. If I’m mowing the lawn, an LLM isn’t going to help me, but it can be valuable when I’m trying to figure out how to repair a broken mower.

As with almost all hot issues, there is more to this story than “AI is dumb. Look at this ridiculous output!” and “AI is going to solve all problems.” AI for software developers hit an inflection point at the end of November last year when two key models were released (Opus 4.5 and GPT-5.1). My work life changed almost overnight. That hasn’t happened in a lot of other areas yet, and a lot of people are judging “AI” by what they can get for free and applying it to areas where it’s not a great fit. That doesn’t make it a fad. It just means AI isn’t growing at the same pace in every field, and it isn’t equally useful in every industry.